Case Study

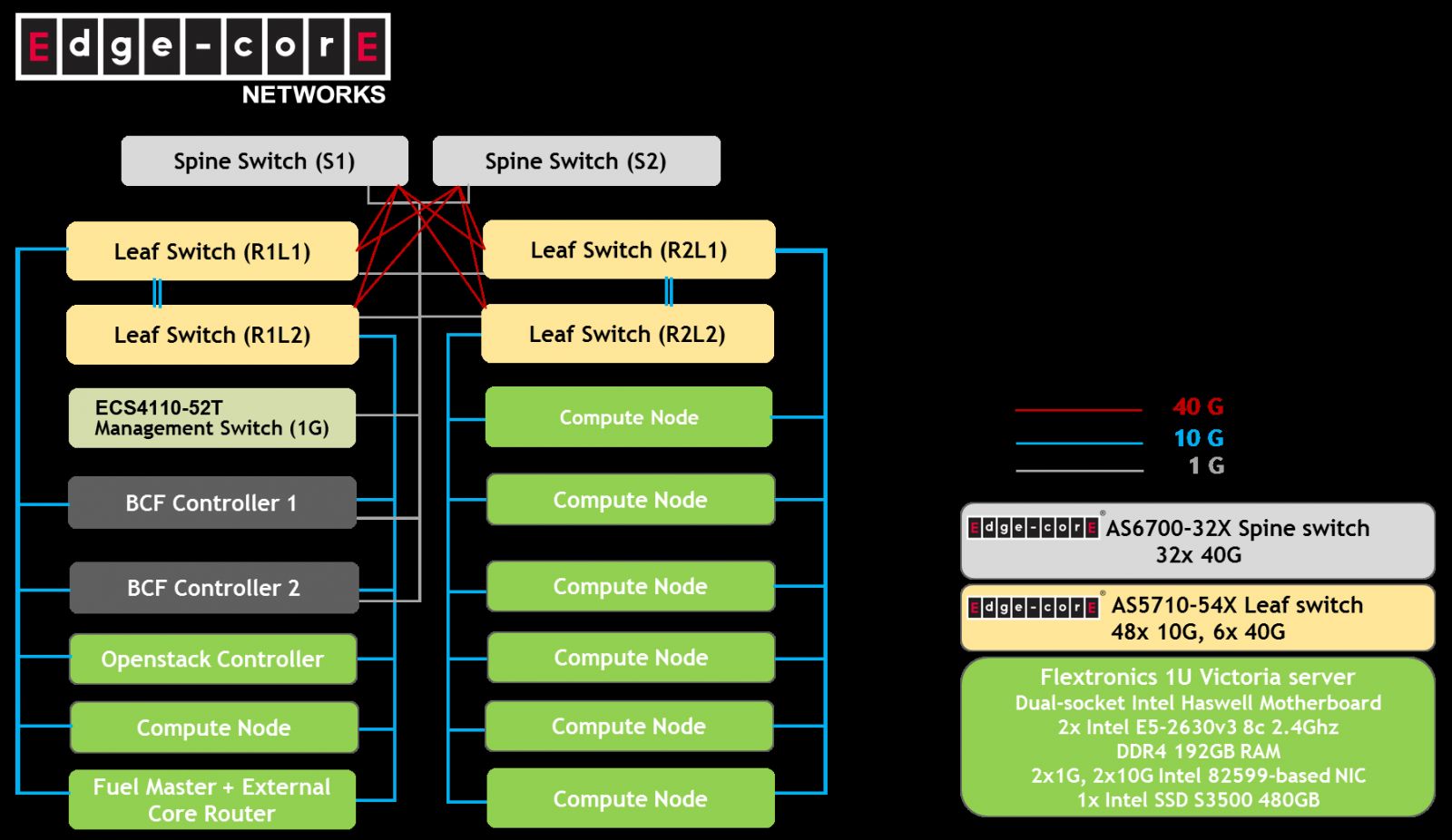

In 2015, six Edgecore Networks open networking data center switches were deployed in CloudLabs to validate Big Switch Networks' Big Cloud Fabric (BCF) with OpenStack: two AS6700-32X spine switches and four AS5710-54X leaf switches. The project validated the ease of deployment and the full functionality of the BCF P+V solution in a customer lab environment built on Edgecore's open networking ToR and spine switches.

Background

CloudLabs is an initiative within Ciii chartered with streamlining efforts to serve its customers' cloud-related needs. With the emergence of scale-out computing workloads for e-commerce, big data analytics, and private cloud, Ciii customers are demanding integrated solutions that combine rack-scale hardware with an integrated software stack. In many cases, the complete stack is a converged infrastructure that pairs optimized hardware with software architectures that address specific workload requirements for speed, performance, power, and cost. Over the last decade, the trend toward disaggregation and the proliferation of open source software have produced new scale-out blueprints that have dramatically changed the infrastructure landscape.

CloudLabs provides engineering and design services to optimize such rack-level solutions, with an emphasis on multivendor equipment integration, and helps customers accelerate a range of cloud, converged infrastructure, and data center strategies. For this purpose, CloudLabs has created a customer showcase staging area that runs a live multi-rack cloud instance on hardware developed within Flex. This staging lab lets our customers and partners deploy the latest technologies in a controlled environment, speeding their adoption and identifying value propositions for the end customer. It also gives customers access to a sandbox environment where they can test the performance of their workloads and evaluate the solution's capabilities.

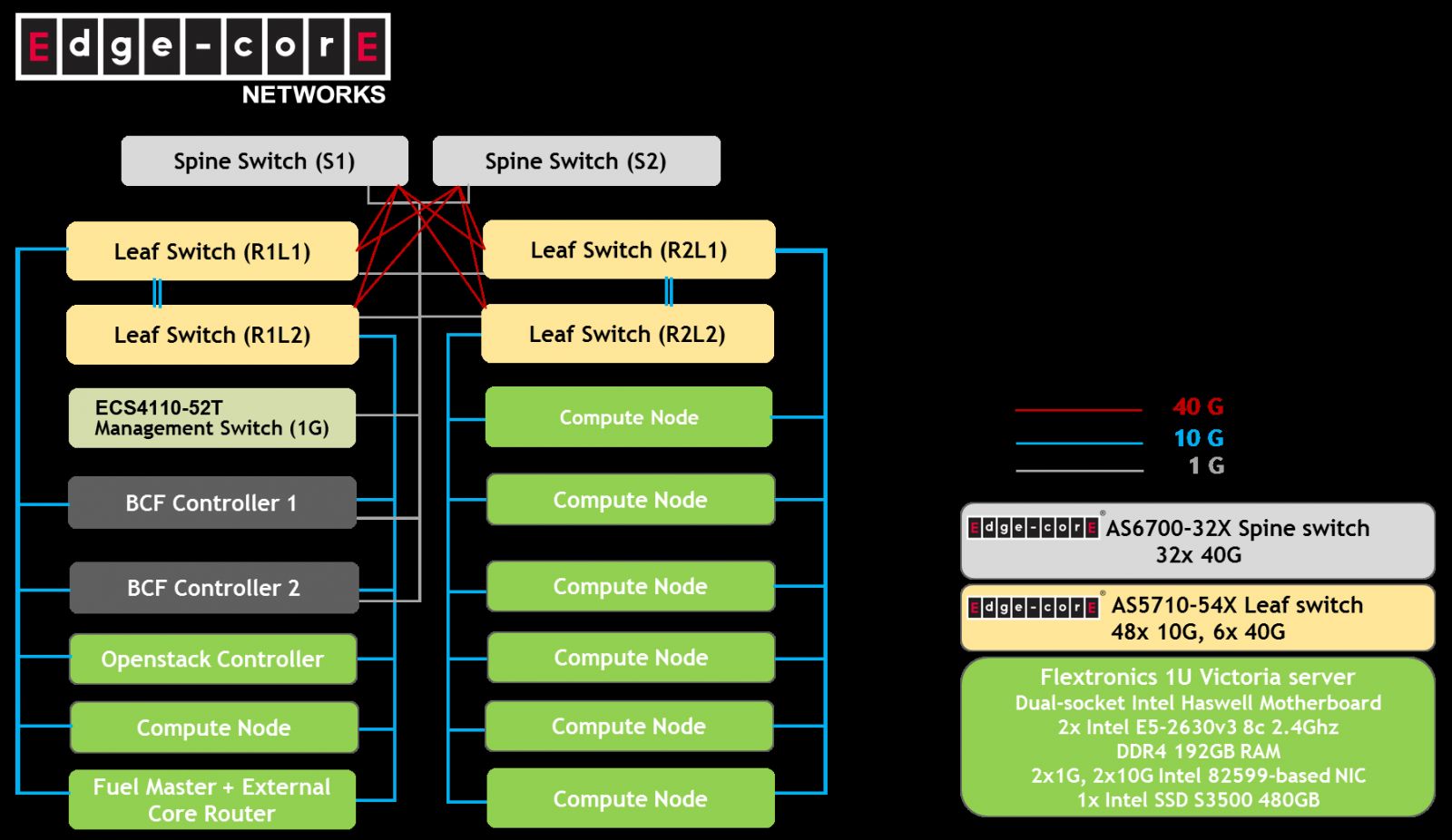

Application Diagram / Lab Environment

Clos Network Topology

The network deployed at CloudLabs uses a leaf-spine topology, in which every leaf switch is the same number of hops (leaf, spine, leaf) away from every other leaf switch, so latency across the fabric is predictable and consistent. Every physical leaf switch is connected to every spine switch in a full mesh, which makes the fabric a Clos network. The leaf switches in the same rack are connected to each other with two 10-GbE links to form a leaf group; the dual links increase available bandwidth and provide link-level redundancy. The compute nodes connect to both ToR leaf switches in their rack over dual-bonded 10-GbE links. The Big Cloud Fabric Controller nodes connect the same way, to both ToR leaf switches in their rack, for vSwitch management.
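The full-mesh property can be checked with a small model. The sketch below is illustrative only: it assumes the four leaf switches form two racks of two (one leaf group per rack), uses hypothetical switch names, and treats each leaf-spine connection as a single logical link regardless of how many physical cables it uses. It counts the fabric links and uses a breadth-first search over leaf-spine links to confirm that every pair of leaves is exactly two hops apart.

```python
from collections import deque
from itertools import combinations

# Hypothetical names; assumes 2 spines and 2 racks with 2 leaf switches each.
SPINES = ["spine1", "spine2"]
RACKS = {"rack1": ["leaf1", "leaf2"], "rack2": ["leaf3", "leaf4"]}
LEAVES = [leaf for group in RACKS.values() for leaf in group]


def build_fabric():
    """Full mesh: connect every leaf to every spine (leaf-group peer links excluded)."""
    adj = {node: set() for node in SPINES + LEAVES}
    for leaf in LEAVES:
        for spine in SPINES:
            adj[leaf].add(spine)
            adj[spine].add(leaf)
    return adj


def hops(adj, src, dst):
    """Breadth-first search returning the hop count from src to dst, or None."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            return dist[node]
        for nxt in adj[node]:
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return None


adj = build_fabric()
# Each undirected link appears in two adjacency sets, so halve the total.
fabric_links = sum(len(peers) for peers in adj.values()) // 2
# Set of distinct leaf-to-leaf hop counts across the fabric.
leaf_hop_counts = {hops(adj, a, b) for a, b in combinations(LEAVES, 2)}
# Two 10-GbE peer links per leaf group, one leaf group per rack.
peer_links = 2 * len(RACKS)

print(fabric_links, leaf_hop_counts, peer_links)
```

Under these assumptions the model has 8 leaf-spine links, every leaf pair is 2 hops apart, and the two leaf groups add 4 peer links. The peer links are deliberately left out of the search: they shorten paths only inside a rack and are there for bandwidth and redundancy, not for the uniform fabric path length.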

Why Edgecore Networks

The Edgecore AS5710-54X and AS6700-32X bare-metal switches, the first OCP-approved 10G/40G switches, ship with ONIE (Open Network Install Environment) pre-loaded, which makes it easy to install and run different network operating systems on the Edgecore hardware. The ECS4110-52T 48-port Layer 2 GE management switch serves as the administration switch for the BCF controllers and the leaf and spine switches.
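One way ONIE lets different network operating systems run on the same hardware is by searching for an installer image using a list of default file names, from the most platform-specific to the most generic. The sketch below is a simplified model of that search order as described in the ONIE documentation; exact ordering and file suffixes can differ between ONIE versions. The architecture, vendor, and machine values in the usage example are hypothetical placeholders, not the real ONIE platform strings of the Edgecore switches, and `pick_installer` is an illustrative helper, not part of ONIE.

```python
def onie_default_installer_names(arch, vendor, machine, machine_revision):
    """Candidate installer file names, most specific first (simplified model)."""
    platform = f"{arch}-{vendor}_{machine}"
    return [
        f"onie-installer-{platform}-r{machine_revision}",
        f"onie-installer-{platform}",
        f"onie-installer-{vendor}_{machine}",
        f"onie-installer-{arch}",
        "onie-installer",
    ]


def pick_installer(candidates, available):
    """Hypothetical helper: return the first candidate a server offers, or None."""
    for name in candidates:
        if name in available:
            return name
    return None


# Hypothetical platform values, for illustration only.
names = onie_default_installer_names("x86_64", "examplevendor", "example_switch", 0)
served = {"onie-installer", "onie-installer-x86_64"}
print(pick_installer(names, served))
```

Trying the most specific name first lets an operator stage a platform-tailored NOS image alongside a generic fallback on the same server.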

Models used in this case study

2 units

AS6700-32X 40GbE data center switch with 32 QSFP ports, GE RJ-45 ports, 4 x 10G SFP+ ports, and 2 QSFP ports, serving as spine switches with 40G downlinks to the leaf switches.

4 units

AS5710-54X 10GbE data center switch with 48 x 10G SFP+ ports and 6 x 40G QSFP+ uplink ports, serving as leaf switches with uplinks to the spine switches and downlinks to the controllers and compute nodes.

1 unit

ECS4110-52T 48-port GE L2 switch as a management switch
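The AS5710-54X port counts listed above imply a maximum downlink-to-uplink ratio for a leaf switch. The sketch below works through that arithmetic under a stated assumption: every port is populated and running at line rate. It does not reflect how many ports the CloudLabs deployment actually cabled (for example, the two 10-GbE leaf-group peer links per leaf are not subtracted), so treat the result as a theoretical upper bound.

```python
def oversubscription(down_ports, down_gbps, up_ports, up_gbps):
    """Return (downlink Gb/s, uplink Gb/s, downlink:uplink ratio) for one switch."""
    downlink = down_ports * down_gbps
    uplink = up_ports * up_gbps
    return downlink, uplink, downlink / uplink


# AS5710-54X: 48 x 10G SFP+ downlinks, 6 x 40G QSFP+ uplinks (all ports assumed in use).
down, up, ratio = oversubscription(48, 10, 6, 40)
print(f"{down} Gb/s down, {up} Gb/s up, {ratio:.1f}:1")
```

With all ports in use this gives 480 Gb/s of downlink capacity against 240 Gb/s of uplink capacity, a 2:1 ratio.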

For more details on the Ciii project, download the white paper (PDF) from the Big Switch Networks website:

http://go.bigswitch.com/rs/974-WXR-561/images/Flextronics_Ciii_WP_BCF_PV_Final.pdf